Shopify for AI deployment

DRAGbot is AI deployment infrastructure for enterprises that need to control how LLMs operate inside their organizations.

The consumer story (no-code chatbots for regulated industries) is the wedge. The infrastructure story is the business.

Unblock compliance. Make deployment trivial. The demand and use cases will follow.

Two Blockers Stop Every Enterprise

Today, enterprises face two blockers to deploying conversational AI:

1. Compliance

Legal teams veto deployments because they can't prove PII isolation.

- Sensitive data flows to LLM providers

- No way to prove to auditors what the AI said

- Compliance teams can't sign off

2. Complexity

Deploying AI requires ML engineers, DevOps, and months of integration.

- Container orchestration complexity

- LLM provider management overhead

- RAG pipeline implementation

Remove both blockers, and demand emerges.

Use cases that don't exist today become obvious when deployment is trivial and compliant by default.

What We Actually Built

Four infrastructure layers that make deployment trivial and compliant by default:

1. Deployment Orchestration

One-click provisioning of containerized AI agents. Configuration as data, not code.

- Multi-provider abstraction (Claude, GPT, Gemini)

- Customer-managed API keys

- Bot Space composition for multi-agent routing

The Shopify model for AI.
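As a rough illustration of "configuration as data, not code", a deployment could be described by a plain record that the control plane validates before provisioning. This is a minimal sketch, not DRAGbot's actual schema: the `DeploymentConfig` class, its field names, and the `validate` method are all assumptions made for the example.

```python
from dataclasses import dataclass, field

# Provider names taken from the list above; the lowercase identifiers are assumed.
SUPPORTED_PROVIDERS = {"claude", "gpt", "gemini"}


@dataclass
class DeploymentConfig:
    """Hypothetical deployment description: pure data, no executable logic."""
    name: str
    provider: str
    api_key_ref: str  # reference to a customer-managed key, never the key itself
    documents: list = field(default_factory=list)

    def validate(self):
        """Return a list of human-readable problems; an empty list means valid."""
        errors = []
        if not self.name:
            errors.append("name is required")
        if self.provider not in SUPPORTED_PROVIDERS:
            errors.append(f"unsupported provider: {self.provider!r}")
        if not self.api_key_ref:
            errors.append("api_key_ref is required")
        return errors


config = DeploymentConfig(
    name="front-desk",
    provider="claude",
    api_key_ref="customer-key-1",
    documents=["intake-policy.pdf"],
)
print(config.validate())
```

Because the record is only data, it can be stored, diffed, and reviewed like any other configuration before a container is provisioned.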

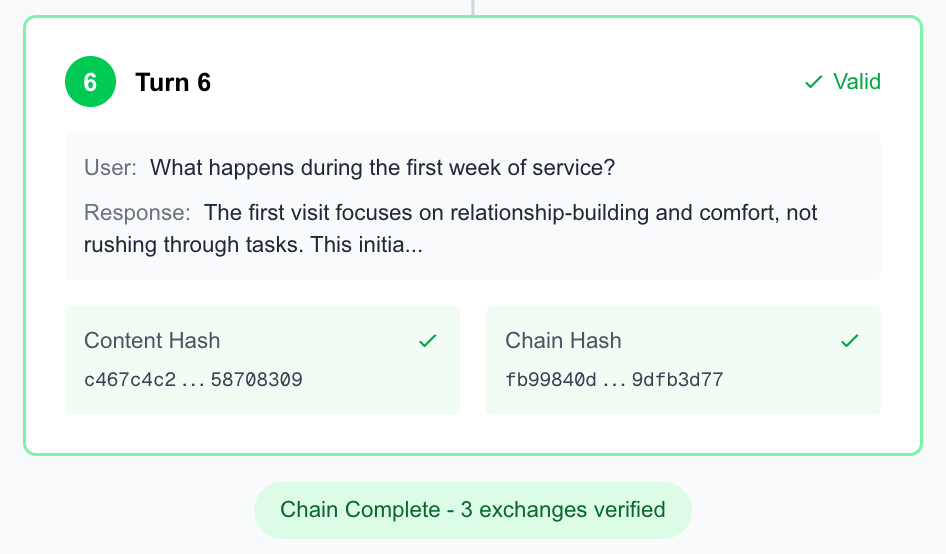

2. Observability with Provenance

Every conversation is a cryptographic event stream. Not logging—provenance.

- SHA-256 hash chains per conversation

- Real-time debug panels (prompts, responses, markers)

- Clickable hash verification for full audit chain

What compliance teams need to say yes.

3. Security Protocols

Security is architectural, not policy-based. PII physically cannot reach the LLM.

- Ghostform - PII stays client-side, AI sees tokens only

- Row-Level Security on all tables

- Per-deployment containers with isolated SQLite

Not policy. Architecture.
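The token-substitution idea behind Ghostform can be sketched as follows. This is an illustration under assumptions, not the actual Ghostform implementation: the `ClientVault` class, the token format, and `build_llm_payload` are hypothetical names, and a real client would run in the browser rather than in Python.

```python
import secrets


class ClientVault:
    """Hypothetical client-side store mapping opaque tokens to raw PII values."""

    def __init__(self):
        self._values = {}

    def tokenize(self, field_name, value):
        # The token carries the field name and random hex, never the value itself.
        token = f"[[{field_name.upper()}_{secrets.token_hex(4)}]]"
        self._values[token] = value
        return token

    def detokenize(self, text):
        # Runs only on the client, after the model's reply comes back.
        for token, value in self._values.items():
            text = text.replace(token, value)
        return text


def build_llm_payload(vault, form):
    """Replace every form value with a token before anything leaves the client."""
    return {name: vault.tokenize(name, value) for name, value in form.items()}


vault = ClientVault()
payload = build_llm_payload(vault, {"name": "Jane Doe", "ssn": "123-45-6789"})
reply = f"Thanks {payload['name']}, your intake form is complete."
print(vault.detokenize(reply))
```

In this sketch the model only ever sees strings such as `[[NAME_…]]`; the mapping back to real values never leaves the vault.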

4. LLM Cost Optimization

Claude-optimized caching strategy. Customers control their own spend.

- Semantic prompt caching for repeated patterns

- RAG with local embeddings to reduce LLM calls

- Protocol-specific routing (simple queries → smaller models)

No shared liability.
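Protocol-specific routing could look something like the sketch below. The protocol names, the word-count threshold, and the model identifiers `small-model` and `large-model` are placeholders chosen for illustration; the source does not specify how DRAGbot classifies a query as simple.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    model: str   # placeholder model identifier, not a real model name
    reason: str  # recorded so the routing decision can be inspected later


# Assumed set of protocols whose queries are usually cheap to answer.
SIMPLE_PROTOCOLS = {"faq", "scheduling"}


def choose_model(protocol, query, max_simple_words=30):
    """Send short queries on simple protocols to the smaller model."""
    if protocol in SIMPLE_PROTOCOLS and len(query.split()) <= max_simple_words:
        return Route("small-model", "simple protocol, short query")
    return Route("large-model", "default route")


print(choose_model("faq", "What are your opening hours?"))
print(choose_model("triage", "I have chest pain and shortness of breath."))
```

Keeping the reason alongside the chosen model makes each routing decision easy to surface in a debug panel.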

| Layer | What's Built |
|---|---|
| Orchestration | One-click containerized AI deployment |
| Observability | Cryptographic audit chains |
| Security | Ghostform + RLS + RBAC |
| Optimization | Caching + customer keys |

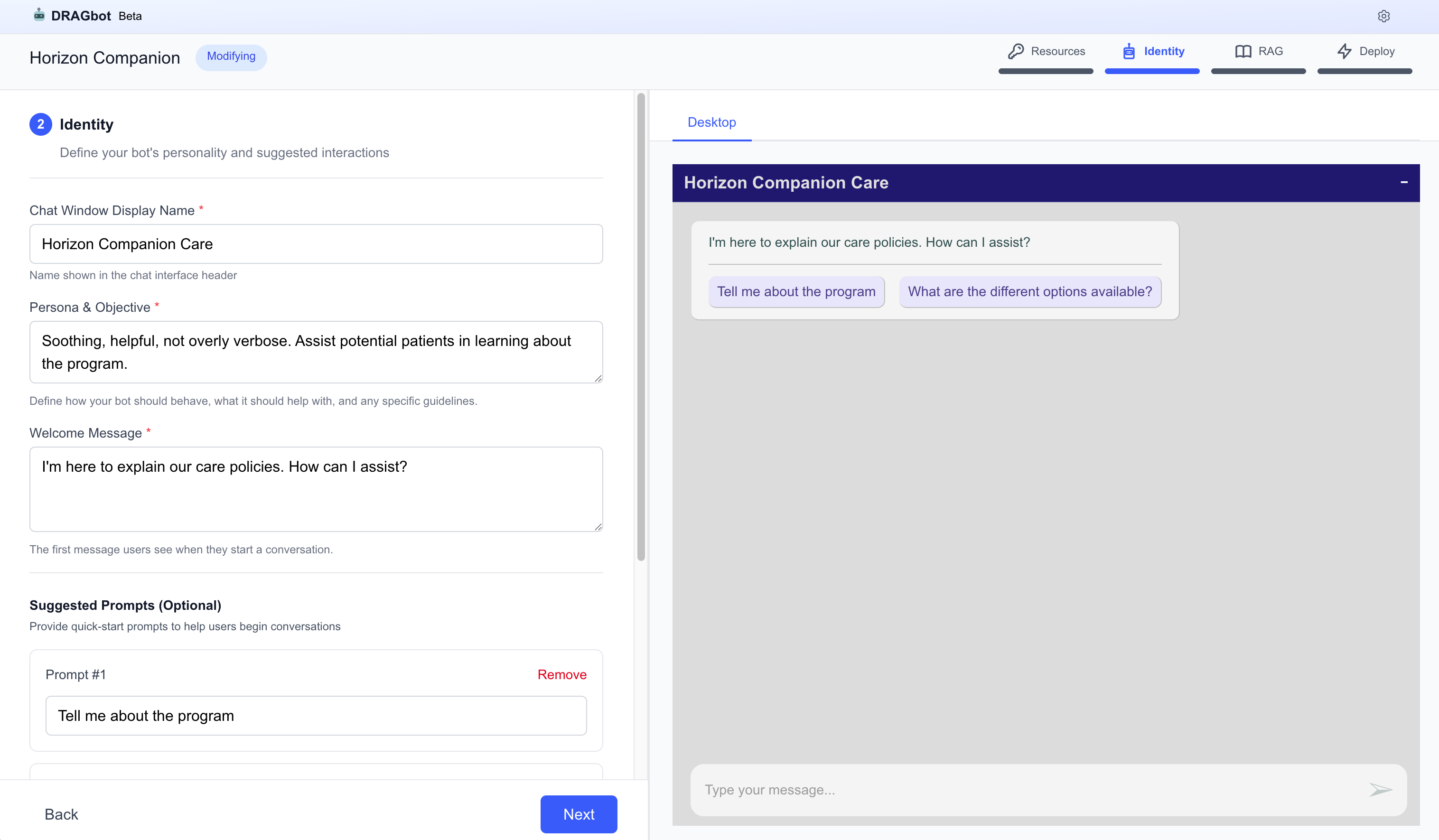

How It Works

No code required. Describe what you need, deploy in minutes.

1. Define Identity

2. Upload Documents

3. Generate RAG summary

4. Deploy

Deployment Options

Architecture already supports customer-controlled infrastructure:

Managed Cloud

Customers bring their own API keys. Shared infrastructure with full tenant isolation.

Customer Cloud

Deployments on their AWS/GCP/Azure. Control plane orchestrates, compute on their bill.

Private Cloud

Fully self-hosted inside customer data centers. Control plane as licensed software.

The Product

Four bot types that cover the full front-desk workflow:

Answer Questions

24/7 Q&A grounded in your documents.

Collect Intake

Forms that never expose PII to AI.

Schedule Appointments

Calendar booking via Calendly.

Triage & Route

Direct to the right specialist.

Why Now

Regulation is accelerating

EU AI Act, HIPAA enforcement, state privacy laws. Compliance is no longer optional.

Enterprises are desperate

They need AI but can't risk compliance failures. Stuck between innovation and regulation.

LLM context windows are large enough

Models can now handle contexts large enough to hold an organization's document knowledge.

Technical Architecture

Cryptographic integrity built into every conversation.

Immutable Conversation History

1. Each turn is hashed: user prompt + LLM response + state

2. Each hash chains to the previous turn's hash (like a blockchain)

3. Tampering breaks the chain, and anyone can detect the break by recomputing the hashes

4. Auditors verify via a single API call
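The hash-chain steps above can be sketched in a few lines. This is a minimal illustration assuming a JSON-serialized turn and an all-zero genesis hash; the function names, field names, and serialization are assumptions, not DRAGbot's actual format.

```python
import hashlib
import json

GENESIS = "0" * 64  # assumed placeholder "previous hash" for the first turn


def hash_turn(prev_hash, prompt, response, state):
    """SHA-256 over the previous hash plus this turn's prompt, response, and state."""
    payload = json.dumps(
        {"prev": prev_hash, "prompt": prompt, "response": response, "state": state},
        sort_keys=True,            # stable key order so the hash is reproducible
        separators=(",", ":"),
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_turn(chain, prompt, response, state):
    """Append a turn whose hash commits to the whole history before it."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    turn = {"prompt": prompt, "response": response, "state": state, "prev": prev_hash}
    turn["hash"] = hash_turn(prev_hash, prompt, response, state)
    chain.append(turn)
    return turn


def verify_chain(chain):
    """Recompute every hash; any edited, removed, or reordered turn fails the check."""
    prev_hash = GENESIS
    for turn in chain:
        if turn["prev"] != prev_hash:
            return False
        expected = hash_turn(prev_hash, turn["prompt"], turn["response"], turn["state"])
        if expected != turn["hash"]:
            return False
        prev_hash = turn["hash"]
    return True


chain = []
append_turn(chain, "What are your hours?", "We open at 9am.", {"bot": "faq"})
append_turn(chain, "Book me for Tuesday.", "Booked.", {"bot": "scheduling"})
print(verify_chain(chain))
```

A verification endpoint would essentially run `verify_chain` over the stored turns. Note that a chain like this only shows internal consistency; detecting a wholesale rewrite also requires anchoring the latest hash somewhere the operator cannot alter.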

The Moat

| Competitor | RAG | Deploy | Privacy Guarantee¹ | Crypto Audit² |
|---|---|---|---|---|
| Botpress | | | | |
| OpenAI Assistants | | | | |
| Intercom Fin | | | | |
| DRAGbot | | | | |

¹ Privacy Guarantee: Architectural enforcement, not policy. PII captured client-side only; LLM receives tokens, never raw data.

² Crypto Audit: SHA-256 hash chain per conversation turn. Verify integrity via the /verify/:id API endpoint.

Compliance is hard to build, easy to buy. We're the buy.

Distribution Strategy

Partner-led infrastructure enables three distribution paths:

Strategic Partnership (Anthropic, OpenAI, AWS)

The "Claude deployment platform" or "AWS Managed AI Agents" model.

- Leverage existing enterprise trust and compliance relationships

- Position as natural extension of LLM API business

- Accelerate adoption through established GTM channels

Vertical IT Integrator Licensing

Partner with implementation consultancies in regulated industries.

- White-label DRAGbot for their hospital/insurance/financial customers

- $100K-250K annual licenses

- $300K-1M revenue potential per partner

Direct Enterprise (Requires Capital)

Traditional SaaS startup path.

- 18-24 month sales cycles, high CAC

- Compliance credibility challenges as unknown vendor

- Least attractive path without funding

Current focus: Path 2 (partner licensing) with Path 1 exploration.

Business Model

Self-serve SaaS

- SMBs in regulated verticals

- Per-deployment, per-conversation, or seat-based pricing

- Self-service onboarding

Enterprise

- Custom deployments, SLAs, audit support

- Licensed self-hosted on their own infra

- Unlimited deploys with enterprise license

The Anthropic Advantage

The same infrastructure, positioned as an Anthropic offering, changes the conversation:

Why Anthropic wins

- Claude is already trusted as the model provider

- Compliance teams already evaluated Anthropic's security

- "Deploy Claude with Claude's infrastructure" is natural

- Enterprise relationships already exist

What Anthropic gets

- Deployment layer - API customers → platform customers

- Observability primitives - Provenance as differentiator

- Compliance architecture - Ghostform solves regulated-industry blocker

- GTM proof - Solo founder built enterprise platform with Claude

The Ask

For Partners

Seeking vertical IT integrators and implementation consultancies in healthcare, insurance, and financial services to white-label the platform.

For Strategic Acquirers

Exploring acquisition or partnership with LLM providers who want turnkey deployment infrastructure for their enterprise customers.

Or raising to fund:

Platform expansion

Multi-cloud deploy, SSO, teams capabilities

Certifications

SOC 2 / HIPAA certification

Go-to-market

Partner licensing sales motion

Founder

Claude-augmented, solo AI infrastructure builder.

Full-stack product developer with 10 years of experience building performant systems across the stack—backend, cloud infrastructure, and React frontends. Background in client/server architecture and deploying internal applications for enterprise environments.

Prior career in FP&A as a CPA, operating in regulated utilities industries.

Let's talk: admin@drabot.io

The thesis is not "we know the use cases."

Make deployment trivial and compliant. The market will show us what it needs.

Shopify didn't know about dropshipping, print-on-demand, or digital downloads when they started. They made selling easy, and the creativity of millions of merchants revealed use cases no one predicted.

DRAGbot makes compliant AI deployment easy. The real value is in what we can't see yet.

Built solo with Claude. That's the proof of concept—for the platform and for Claude's capability.